Modern financial market models and pricing processes usually represent the price change over an elementary time period as the sum of two factors: a deterministic instantaneous increment and a random increment. The first factor includes compensation for assumed risk, as well as the price effects of imitation and herding among traders. The second factor is the noise component of price dynamics, whose amplitude is called volatility. Volatility may itself be a systematic parameter controlled by imitation and other factors. If the first factor is absent and volatility is constant, the second term alone produces random walk trajectories.
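The two-factor decomposition can be sketched in a few lines of Python. This is only an illustration under an assumed discretization: each step adds `mu * dt` (the deterministic part) plus `sigma * sqrt(dt) * eps` with standard normal `eps` (the random part). The names `simulate_path`, `mu`, `sigma`, and `dt` are hypothetical and do not come from the text.

```python
import math
import random


def simulate_path(s0, mu, sigma, dt, n_steps, seed=None):
    """Simulate prices whose increments are a drift part plus a noise part.

    Assumed (illustrative) discretization:
        increment = mu * dt + sigma * sqrt(dt) * eps,  eps ~ N(0, 1)
    """
    rng = random.Random(seed)
    path = [s0]
    for _ in range(n_steps):
        deterministic = mu * dt                               # first factor
        noise = sigma * math.sqrt(dt) * rng.gauss(0.0, 1.0)   # second factor
        path.append(path[-1] + deterministic + noise)
    return path


# No drift, constant volatility: the noise term alone gives a random walk.
walk = simulate_path(s0=100.0, mu=0.0, sigma=1.0, dt=1.0, n_steps=250, seed=42)

# No volatility: only the deterministic increment remains.
trend = simulate_path(s0=100.0, mu=0.5, sigma=0.0, dt=1.0, n_steps=4)
```

Setting `mu` to zero reproduces the random walk case described above, while setting `sigma` to zero leaves a straight-line trend.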

Elementary Markov Process

We start by considering the elementary Markov process of pricing. But before that, let’s formalize the key concepts.

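- A finite stochastic process is a process with independent values if

(1) for any statement $p$ whose truth depends only on the outcomes of experiments before the $n$-th,

$$P[f_n = s_j \mid p] = P[f_n = s_j],$$

where $f_n$ denotes the outcome of the $n$-th experiment and $s_j$ is one of the possible outcomes.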

For such processes, knowledge of the outcomes already observed does not affect our forecast for the next experiment. For Markov processes this requirement is weakened: the immediately preceding outcome, and only that outcome, is allowed to affect the forecast.

- A finite Markov process is a finite stochastic process such that

(2) for any statement $p$ whose truth depends only on the outcomes of experiments before the $n$-th,

$$P[f_n = s_j \mid (f_{n-1} = s_i) \wedge p] = P[f_n = s_j \mid f_{n-1} = s_i]$$

(it is assumed that $f_{n-1} = s_i$ and $p$ are compatible).

Condition (2) is called the Markov property. Sometimes the term “Markov process” is reserved for a continuous-time stochastic process satisfying the Markov property, and the object defined in (2) is then called a Markov chain with a finite number of states.

- A finite Markov chain is a finite Markov process for which the transition probabilities $p_{ij}(n)$ do not depend on $n$.

Recall that the transition probabilities at the $n$-th step, usually denoted $p_{ij}(n)$, are

$$p_{ij}(n) = P[f_n = s_j \mid f_{n-1} = s_i].$$
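When the transition probabilities do not depend on the step number, one simple way to approximate them from an observed sequence of states is to count transitions over all steps. The sketch below assumes exactly that homogeneity. The function name `estimate_transition_probs` and the sample sequence are hypothetical and made up for illustration.

```python
from collections import Counter, defaultdict


def estimate_transition_probs(sequence):
    """Estimate p_ij as (number of i -> j steps) / (number of steps leaving i)."""
    counts = defaultdict(Counter)
    for prev, curr in zip(sequence, sequence[1:]):
        counts[prev][curr] += 1
    probs = {}
    for state, row in counts.items():
        total = sum(row.values())
        probs[state] = {nxt: c / total for nxt, c in row.items()}
    return probs


# Hypothetical sequence of daily states (invented data, not market data).
observed = ["F", "F", "B", "M", "M", "F", "B", "F", "M", "M"]
p_hat = estimate_transition_probs(observed)
```

Pooling counts across all steps is only justified if the probabilities really are the same at every step. With short sequences the estimates are noisy.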

A Markov chain can be pictured as a process that moves from state to state. It starts in state $s_i$ with probability $p_i(0)$. If at some point it is in state $s_i$, then on the next “step” it moves to state $s_j$ with probability $p_{ij}$. The initial probabilities can be understood as the probabilities of each possible “start”.

The initial probability vector together with the transition matrix fully defines the Markov chain as a stochastic process, since they are sufficient to construct the full probability measure on the tree of outcomes. The transition matrix of the Markov chain is the matrix $P$ with elements $p_{ij}$. The vector of initial probabilities (or initial distribution) is the vector $\pi_0 = \{p_j(0)\} = \{P[f_0 = s_j]\}$.

Therefore, given a probability vector $\pi_0$ and a probability matrix $P$, there is a unique Markov chain (up to a renaming of states) for which $\pi_0$ is the initial distribution and $P$ is the transition matrix.
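To make this concrete, here is a minimal sketch showing that an initial vector and a transition matrix are enough both to draw trajectories and to compute the distribution after $n$ steps, using the standard relation $\pi_n = \pi_0 P^n$. The function names and the two-state chain are hypothetical and serve only to exercise the code.

```python
import random


def sample_chain(states, pi0, P, n_steps, seed=None):
    """Draw one trajectory f_0, ..., f_n from a finite Markov chain."""
    rng = random.Random(seed)
    idx = rng.choices(range(len(states)), weights=pi0)[0]  # the "start"
    trajectory = [states[idx]]
    for _ in range(n_steps):
        # The next state depends only on the current one (row idx of P).
        idx = rng.choices(range(len(states)), weights=P[idx])[0]
        trajectory.append(states[idx])
    return trajectory


def distribution_after(pi0, P, n_steps):
    """Return pi_n = pi_0 P^n by repeated vector-matrix multiplication."""
    pi = list(pi0)
    for _ in range(n_steps):
        pi = [sum(pi[i] * P[i][j] for i in range(len(pi)))
              for j in range(len(pi))]
    return pi


# Hypothetical two-state chain (values chosen for illustration only).
states = ["up", "down"]
pi0 = [1.0, 0.0]
P = [[0.5, 0.5],
     [0.25, 0.75]]

path = sample_chain(states, pi0, P, n_steps=10, seed=1)
pi2 = distribution_after(pi0, P, 2)
```

Here `pi2` is the probability of each state two steps after the start, and `path` is one possible realization of the same chain.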

Examples of Markov Process Operation

To better understand what we’re talking about, consider two illustrative examples.

1. Market Diagnosis.

Suppose the market never rises two days in a row. If the market is bullish today, then tomorrow it is equally likely to be flat or bearish. If it is flat (or bearish) today, then with equal probability it either stays in the same state tomorrow or changes. If it changes, then in half of the cases the next day is bullish.

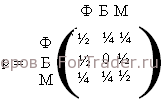

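Under these conditions, it is convenient to represent the market as a Markov chain with three states, S = {flat (F), bullish (B), bearish (M)}, and the transition matrix (rows and columns ordered F, B, M)

$$
P = \begin{pmatrix}
1/2 & 1/4 & 1/4 \\
1/2 & 0 & 1/2 \\
1/4 & 1/4 & 1/2
\end{pmatrix}.
$$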
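The rules stated above can be encoded and checked numerically. The sketch below uses the matrix implied by that description, with rows and columns ordered F, B, M, and exact fractions to avoid rounding. The helper `step` and the variable names are illustrative.

```python
from fractions import Fraction as Fr

states = ["F", "B", "M"]  # flat, bullish, bearish
P = [
    [Fr(1, 2), Fr(1, 4), Fr(1, 4)],  # from flat: stay 1/2, else split evenly
    [Fr(1, 2), Fr(0),    Fr(1, 2)],  # from bullish: never bullish again next day
    [Fr(1, 4), Fr(1, 4), Fr(1, 2)],  # from bearish: stay 1/2, else split evenly
]


def step(pi, P):
    """Advance a distribution by one day: pi -> pi P."""
    n = len(pi)
    return [sum(pi[i] * P[i][j] for i in range(n)) for j in range(n)]


# Sanity check: every row is a probability distribution.
rows_ok = all(sum(row) == 1 for row in P)

# Start from a bullish day and look two days ahead.
today = [Fr(0), Fr(1), Fr(0)]
two_days = step(step(today, P), P)

# A distribution that one step leaves unchanged (checked, not derived, here).
candidate = [Fr(2, 5), Fr(1, 5), Fr(2, 5)]
is_invariant = step(candidate, P) == candidate
```

Starting from a bullish day, `two_days` gives probabilities 3/8, 1/4, and 3/8 for flat, bullish, and bearish, and the distribution (2/5, 1/5, 2/5) is left unchanged by the chain.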

2. Finite price movement of an asset. The exchange rate wanders randomly in a price corridor between support (S) and resistance (R). The initial price level is somewhere in the middle between S and R. The price tends to rise, i.e., to reach the resistance level. The distance in points from S to R is marked with the integers from 0 to n; the initial price level corresponds to a number i between 0 and n, which is the initial state.

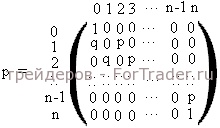

At each step the market moves one point up (toward state n) with probability p or one point down (toward state 0) with probability q = 1 - p.

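States 0 and n are absorbing. We assume that p is not equal to 0 and p is not equal to 1. In this case, the transition matrix (rows and columns ordered 0, 1, ..., n) is

$$
P = \begin{pmatrix}
1 & 0 & 0 & 0 & \cdots & 0 & 0 & 0 \\
q & 0 & p & 0 & \cdots & 0 & 0 & 0 \\
0 & q & 0 & p & \cdots & 0 & 0 & 0 \\
\vdots & & & & \ddots & & & \vdots \\
0 & 0 & 0 & 0 & \cdots & q & 0 & p \\
0 & 0 & 0 & 0 & \cdots & 0 & 0 & 1
\end{pmatrix},
$$

that is, $p_{00} = p_{nn} = 1$, $p_{i,i+1} = p$ and $p_{i,i-1} = q$ for $0 < i < n$, and all other elements are zero.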
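As a sketch of how this corridor model behaves, the code below builds the transition matrix for states 0..n with absorbing ends and approximates the probability of reaching resistance by iterating the chain. It then compares the result with the classical gambler's-ruin formula. It assumes one-point steps, and the function names are illustrative.

```python
def corridor_matrix(n, p):
    """Transition matrix for states 0..n with absorbing states 0 and n."""
    q = 1.0 - p
    P = [[0.0] * (n + 1) for _ in range(n + 1)]
    P[0][0] = 1.0          # support reached: the process stays there
    P[n][n] = 1.0          # resistance reached: the process stays there
    for i in range(1, n):
        P[i][i + 1] = p    # one point up
        P[i][i - 1] = q    # one point down
    return P


def prob_reach_resistance(n, p, start, n_iter=2000):
    """Approximate P(absorbed at n | start) by iterating pi <- pi P."""
    P = corridor_matrix(n, p)
    pi = [0.0] * (n + 1)
    pi[start] = 1.0
    for _ in range(n_iter):
        pi = [sum(pi[i] * P[i][j] for i in range(n + 1))
              for j in range(n + 1)]
    return pi[n]


def closed_form(n, p, start):
    """Gambler's-ruin formula for absorption at n before 0."""
    q = 1.0 - p
    if p == q:
        return start / n
    r = q / p
    return (1 - r ** start) / (1 - r ** n)


numeric = prob_reach_resistance(n=4, p=0.6, start=2)
exact = closed_form(n=4, p=0.6, start=2)
```

For a corridor of width 4, an upward step probability of 0.6, and a start in the middle, both approaches give 9/13, roughly 0.69, as the chance of hitting resistance first.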

Now let’s turn to an example from the foreign exchange market.

Example of Markov Chains Formed from Data on Nonfarm Payrolls in the USA

As mentioned above, initial probabilities can be understood as the probabilities of a particular possible “start”. With this in mind, the elementary Markov process of pricing (or the elementary Markov chain) can be viewed as an “instantaneous” market response to an external influence, such as the release of important news, where the direction of the asset’s price movement follows the difference between the released figure and the expected one.

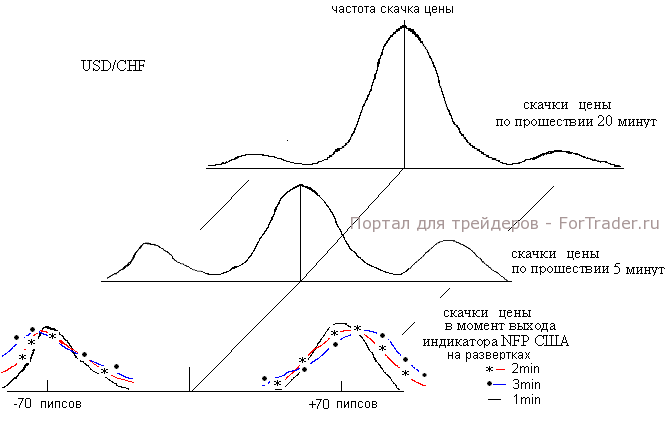

For example, on April 1, 2005, a significant economic indicator <a title=”nonfarm payrolls” href=”https://fortraders.org/b

FAQ

What is a Markov process in trading?

A Markov process in trading is a stochastic model where future price movements depend only on the current state, not on past events.

How do Markov chains apply to financial markets?

Markov chains model market states and transitions between them, such as bullish, bearish, or flat, based on probabilities of price changes.

What factors influence price dynamics in a Markov process?

Price dynamics are influenced by deterministic factors like risk compensation and trader behavior, as well as random factors like volatility and market noise.